A Florida homicide case has drawn national attention after prosecutors said the man accused of killing two University of South Florida doctoral students searched online for ways to hide a body, including questions about whether remains placed in a garbage bag and dumpster could be detected.

The case centers on Hisham Abugharaibeh, 26, who has been charged after authorities said they found the remains of Zamil Limon on the Howard Frankland Bridge. Prosecutors believe Nahida Bristy is also dead, though she remains missing as of the latest public reporting. Investigators have recovered additional human remains from Tampa Bay, but authorities have not yet publicly identified them.

What authorities say happened

According to reporting from NBC News and ABC News, investigators said they found Limon's personal belongings in a compactor dumpster at the apartment complex tied to the suspect. Authorities also said DNA-linked material from both victims and blood evidence were found inside the apartment.

Prosecutors allege Abugharaibeh gave conflicting statements about his whereabouts around the time the students disappeared. An autopsy on Limon found multiple sharp force injuries, according to public reporting. The suspect is being held without bond, and officials have not publicly identified a motive.

A case that also reflects a broader tech-era anxiety

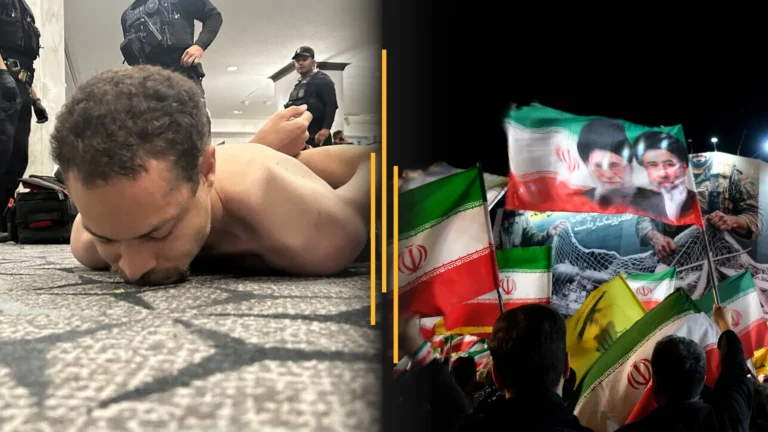

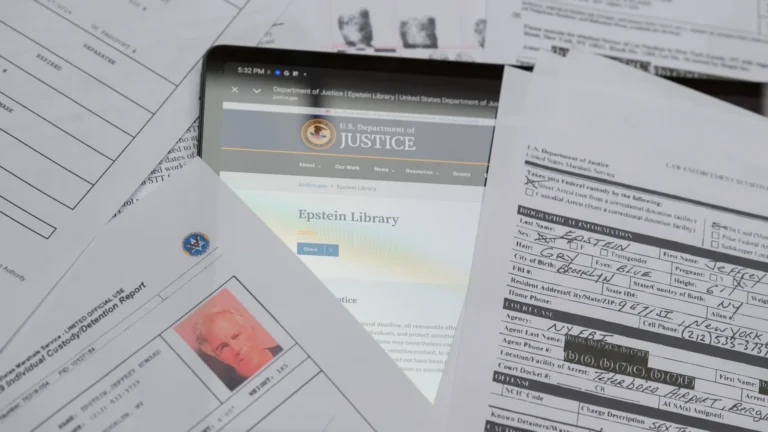

One reason this case has spread so quickly is the allegation that the suspect asked ChatGPT questions about body disposal and detection. That detail has intensified public discussion about how generative AI tools can be misused, even though law enforcement and prosecutors in this case are treating those alleged queries as part of a wider body of evidence, not as the cause of the crime itself.

The broader policy debate is still evolving. Companies developing AI systems have spent the last two years tightening safeguards designed to block requests involving violent wrongdoing, illegal activity, or evasion of law enforcement. OpenAI has published safety and usage policies that restrict harmful assistance, while governments in the U.S. and Europe continue debating how AI systems should be governed when users attempt to weaponize them or extract dangerous instructions.

Recent reporting and policy documents show that AI governance is becoming a bigger story far beyond this individual case. The White House has continued to frame AI safety, security, and accountability as a national priority in its public guidance and executive-branch policy materials, while the European Union has moved forward with the AI Act, one of the most significant regulatory frameworks yet for advanced AI systems. Meanwhile, developers including OpenAI and other major labs have updated use policies to limit assistance that could facilitate violent crimes.

The latest Tech story: AI regulation and safety standards are moving from theory to enforcement

Beyond the courtroom detail in Florida, the larger development in tech is that AI oversight is no longer a vague future issue. It is becoming operational.

In Europe, implementation of the AI Act is advancing. The law classifies some uses of AI by risk tier, with stricter requirements for systems that could affect safety, rights, or public trust. According to the EU’s official framework trackers and policy summaries, providers of general-purpose AI models face increasing scrutiny over transparency, documentation, and risk management. You can review the legislation overview through the EU AI Act tracker.

In the United States, federal agencies have continued publishing guidance on AI security, procurement, and use in public systems. The White House Office of Science and Technology Policy has maintained its Blueprint for an AI Bill of Rights as a reference point for safe automated systems, and the National Institute of Standards and Technology has promoted wider adoption of its AI Risk Management Framework, which many companies now use as a practical baseline for testing, monitoring, and governance.

At the company level, major AI firms are also under pressure to prove their safety controls are real rather than rhetorical. OpenAI’s public policy pages, Google’s AI principles, and Anthropic’s safety materials all point to the same shift: model developers are trying to show regulators and the public that guardrails, refusal behavior, and system monitoring can reduce misuse. But high-profile criminal cases keep testing that claim in the real world, especially when users allegedly phrase harmful questions indirectly or exploit system gaps.

Why this matters now

The Florida case is, first and foremost, a human tragedy involving two doctoral students and a grieving community. But it is also a reminder that modern criminal investigations increasingly intersect with digital behavior: search histories, app data, AI chats, geolocation records, and platform logs are now part of how prosecutors build timelines and narratives.

That does not mean AI tools are uniquely responsible for violent acts. People committed crimes long before chatbots existed. But the case underscores why governments and tech firms are racing to answer difficult questions that once felt abstract: What should an AI system refuse to answer? How should harmful prompts be logged or escalated? What responsibility does a platform have when a user appears to be seeking operational guidance for a crime?

The next phase of the AI debate is likely to be less about flashy product launches and more about accountability. Regulators are moving. Safety frameworks are maturing. And cases like this one are likely to be cited in future arguments over whether current protections are enough.