Meta is rolling out a new parental supervision feature that lets parents see the topics their child has discussed with Meta AI, a move that reflects mounting pressure on technology companies to make generative AI tools safer for younger users.

What Meta announced

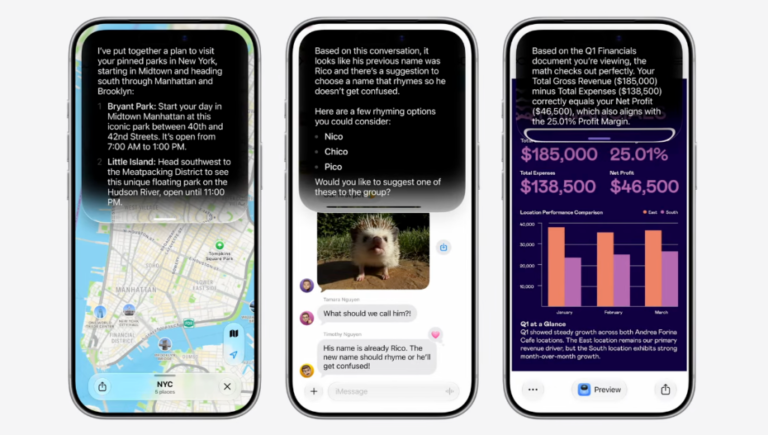

According to TechCrunch, Meta will now allow parents to review the topics their child has discussed with Meta AI, but not the exact conversation transcripts. The company says these topics can include areas such as school, entertainment, lifestyle, travel, writing, and health and wellbeing. The distinction matters: Meta is trying to offer guardians more visibility without exposing every message a teenager sends.

The feature fits into Meta’s broader push to present itself as a company taking age-appropriate AI use more seriously. In recent years, social platforms have faced criticism from regulators, parents, and child-safety advocates over how recommendation systems, messaging tools, and now AI chat experiences affect minors.

The bigger picture in AI safety for minors

Meta’s update arrives at a time when the wider tech industry is under pressure to show that AI systems can be deployed responsibly. Companies including OpenAI, Google, and Microsoft have all emphasized safeguards, transparency, and user controls as generative AI tools become more deeply embedded in everyday products.

That urgency has also been reinforced by policymakers. The U.S. Federal Trade Commission has repeatedly warned platforms about privacy and youth protection, while lawmakers in multiple jurisdictions continue debating stricter online safety rules for minors. In Europe, the Digital Services Act has increased scrutiny of how large digital platforms assess and mitigate risks, including those involving younger users.

Why this feature matters

On the surface, the update may seem modest. Parents are not being given full chat logs, only a view into the types of subjects their child has discussed. But that design choice is likely intentional. It attempts to balance three competing priorities:

- Safety: giving guardians a clearer idea of whether a child is using AI for harmless school questions or drifting into more sensitive territory.

- Privacy: preserving some level of independence for teens who may not want every exchange read word for word.

- Trust: showing regulators and families that Meta is not treating youth AI adoption as an afterthought.

This balancing act is becoming one of the defining product challenges of the AI era. If platforms provide too little oversight, they risk enabling harm. If they provide too much surveillance, they risk undermining user trust and autonomy. Meta’s topic-level disclosure system appears to be an attempt to occupy the middle ground.

The industry trend: controls are becoming a product requirement

Across consumer technology, parental controls are no longer a side menu item. They are increasingly central to product design. Apple has continued expanding its family safety features through Screen Time and related parental controls. Google has done the same with Family Link. As AI assistants move into search, messaging, education, and entertainment, similar controls are becoming expected rather than optional.

That is especially true because AI interactions can feel more intimate than traditional social media use. A child may ask an AI assistant for homework help, emotional advice, creative writing prompts, or explanations about sensitive issues. Even when those interactions are benign, many parents want at least a high-level understanding of how these tools are being used.

What to watch next

The next question is whether topic visibility will satisfy critics or merely open the door to demands for stronger intervention tools. Parents may eventually want alerts for potentially risky categories, time-use summaries, or more control over whether AI features are available at all. Regulators, meanwhile, may ask companies to prove that safeguards are effective rather than simply well-marketed.

Meta’s move also signals something larger: AI products are entering the same accountability phase that social media did years ago, only faster. This time, the debate is not just about what young users post publicly. It is also about what they ask privately, what the system recommends in return, and how much of that loop adults should be able to monitor.

Bottom line

Meta’s new parental visibility feature is a small but meaningful sign of where the tech industry is headed. AI tools for teens can no longer be launched with a generic safety promise and left at that. Parents, regulators, and users increasingly expect clear guardrails, practical oversight, and product designs that recognize that minors use these systems differently from adults.

For Meta, the update is both a safeguard and a signal: the race to build consumer AI is now inseparable from the race to make it accountable.

Sources:

TechCrunch – Meta will now allow parents to see the topics their child discussed with Meta AI

Meta

Federal Trade Commission

European Commission – Digital Services Act

Google Family Link

Apple Screen Time and parental controls